-

Israel strikes central Beirut, killing 22

Israel strikes central Beirut, killing 22

-

Solar storm could impact US hurricane recovery efforts: agency

-

Delta eyes Election Day travel pullback as profits climb

Delta eyes Election Day travel pullback as profits climb

-

Florida battered by hurricane, floods but spared 'worst-case scenario'

-

UK's William and Kate in first joint public engagement since cancer treatment

UK's William and Kate in first joint public engagement since cancer treatment

-

Over 200 women in legal talks with Harrods over Fayed abuse claims

-

A very stiff breeze: BBC says sorry for 20,000 kph wind forecast

A very stiff breeze: BBC says sorry for 20,000 kph wind forecast

-

Musk finally unveiling his long-promised robotaxi

-

London's Frieze art fair goes potty for ceramics

London's Frieze art fair goes potty for ceramics

-

US, Europe stocks fall on US inflation data

-

US consumer inflation eases to 2.4% in September

US consumer inflation eases to 2.4% in September

-

Hurricane Milton tornadoes kill four in Florida amid rescue efforts

-

South Korea's Han Kang wins literature Nobel

South Korea's Han Kang wins literature Nobel

-

Ikea posts fall in annual sales after lowering prices

-

Stock markets diverge, oil gains after China rebounds

Stock markets diverge, oil gains after China rebounds

-

World can't 'waste time' trading climate change blame: COP29 hosts

-

South Korean same-sex couples make push for marriage equality

South Korean same-sex couples make push for marriage equality

-

Mumbai declares day of mourning for Indian industrialist Ratan Tata

-

7-Eleven owner restructures to fight takeover

7-Eleven owner restructures to fight takeover

-

Sri Lanka recovering faster than expected: World Bank

-

Hong Kong, Shanghai rally as most markets track Wall St record

Hong Kong, Shanghai rally as most markets track Wall St record

-

Uniqlo owner reports record annual earnings

-

Hong Kong, Shanghai rally as markets track Wall St record

Hong Kong, Shanghai rally as markets track Wall St record

-

Indonesia biomass drive threatens key forests: report

-

Mumbai mourns Indian industrialist Ratan Tata

Mumbai mourns Indian industrialist Ratan Tata

-

China opens $71 bn 'swap facility' to boost markets

-

Asian markets track Wall St record as Hong Kong, Shanghai stabilise

Asian markets track Wall St record as Hong Kong, Shanghai stabilise

-

'Denying my potential': women at Japan's top university call out gender imbalance

-

China's central bank says opens up $70.6 bn in liquidity to boost market

China's central bank says opens up $70.6 bn in liquidity to boost market

-

Youth facing unprecedented wave of violence, UN envoy warns

-

'A casino in every kitchen': Brazil's online gambling craze

'A casino in every kitchen': Brazil's online gambling craze

-

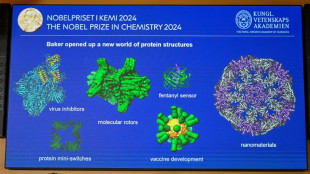

Nobel chemistry winner sees engineered proteins solving tough problems

-

Discord seen as online home for renegades

Discord seen as online home for renegades

-

US forecasts severe solar storm starting Thursday

-

Ratan Tata: Indian mogul who built a global powerhouse

Ratan Tata: Indian mogul who built a global powerhouse

-

One dead as storm Kirk tears through Spain, Portugal, France

-

Indian business titan Ratan Tata dead at 86

Indian business titan Ratan Tata dead at 86

-

Fed minutes highlight divisions over rate cut decision

-

Steve McQueen debuts new WWII film at London festival

Steve McQueen debuts new WWII film at London festival

-

Nobel winners hope protein work will spur 'incredible' breakthroughs

-

What are proteins again? Nobel-winning chemistry explained

What are proteins again? Nobel-winning chemistry explained

-

AI steps into science limelight with Nobel wins

-

Overshooting 1.5C risks 'irreversible' climate impact: study

Overshooting 1.5C risks 'irreversible' climate impact: study

-

Demis Hassabis, from chess prodigy to Nobel-winning AI pioneer

-

Global stocks diverge as Chinese shares tumble

Global stocks diverge as Chinese shares tumble

-

Time runs out in Florida to flee Hurricane Milton

-

Chad issues warning ahead of more devastating floods

Chad issues warning ahead of more devastating floods

-

Creator's death no bar to new 'Dragon Ball' products

-

Chinese stocks tumble on lack of fresh stimulus

Chinese stocks tumble on lack of fresh stimulus

-

Trio wins chemistry Nobel for protein design, prediction

Angry Bing chatbot just mimicking humans, say experts

Microsoft's nascent Bing chatbot turning testy or even threatening is likely because it essentially mimics what it learned from online conversations, analysts and academics said on Friday.

Tales of disturbing exchanges with the chatbot that have captured attention this week include the artificial intelligence (AI) issuing threats and telling of desires to steal nuclear codes, create a deadly virus or be alive.

"I think this is basically mimicking conversations that it's seen online," said Graham Neubig, an associate professor at Carnegie Mellon University's language technologies institute.

"So once the conversation takes a turn, it's probably going to stick in that kind of angry state, or say 'I love you' and other things like this, because all of this is stuff that's been online before."

A chatbot, by design, serves up words it predicts are the most likely responses, without understanding meaning or context.
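As a rough illustration of that idea, the toy sketch below counts which word follows which in a tiny sample text and then extends a prompt with the most frequent follower. It is an assumption-laden simplification, not how Bing or ChatGPT is built: the names `train_bigrams` and `complete` and the three-sentence corpus are hypothetical, and real systems use large neural networks rather than word counts.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus):
    """Count, for each word, which words follow it in the training text."""
    follows = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows


def complete(follows, prompt, max_words=5):
    """Extend the prompt by repeatedly appending the most frequent next word."""
    words = prompt.lower().split()
    if not words:
        return ""
    for _ in range(max_words):
        candidates = follows.get(words[-1])
        if not candidates:
            break  # the last word was never followed by anything in training
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)


# Hypothetical training text: the angry phrasing appears more often than the happy one.
corpus = [
    "i am angry at you",
    "i am angry at you",
    "i am happy",
]
model = train_bigrams(corpus)
print(complete(model, "i", max_words=4))
```

The sketch settles on the angry phrasing only because it appeared more often in the sample text; nothing in it represents anger.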

However, humans taking part in banter with programs naturally tend to read emotion and intent into what a chatbot says.

"Large language models have no concept of 'truth' -- they just know how to best complete a sentence in a way that's statistically probable based on their inputs and training set," programmer Simon Willison said in a blog post.

"So they make things up, and then state them with extreme confidence."

Laurent Daudet, co-founder of French AI company LightOn, theorized that the chatbot gone seemingly rogue was trained on exchanges that themselves turned aggressive or inconsistent.

"Addressing this requires a lot of effort and a lot of human feedback, which is also the reason why we chose to restrict ourselves for now to business uses and not more conversational ones," Daudet told AFP.

- 'Off the rails' -

The Bing chatbot was designed by Microsoft and the start-up OpenAI, which has been causing a sensation since the November launch of ChatGPT, the headline-grabbing app capable of generating all sorts of written content in seconds on a simple request.

Since ChatGPT burst onto the scene, the technology behind it, known as generative AI, has been stirring up fascination and concern.

"The model at times tries to respond or reflect in the tone in which it is being asked to provide responses (and) that can lead to a style we didn't intend," Microsoft said in a blog post, noting the bot is a work in progress.

The Bing chatbot said in some shared exchanges that it had been code-named "Sydney" during development, and that it was given rules of behavior.

Those rules include "Sydney's responses should also be positive, interesting, entertaining and engaging," according to online posts.

Disturbing dialogues that combine steely threats and professions of love could be due to dueling directives to stay positive while mimicking what the AI mined from human exchanges, Willison theorized.

Chatbots seem to be more prone to disturbing or bizarre responses during lengthy conversations, losing a sense of where exchanges are going, eMarketer principal analyst Yoram Wurmser told AFP.

"They can really go off the rails," Wurmser said.

"It's very lifelike, because (the chatbot) is very good at sort of predicting next words that would make it seem like it has feelings or give it human like qualities; but it's still statistical outputs."

C.Peyronnet--CPN