-

Wall Street stocks hit fresh records as oil prices slide

Wall Street stocks hit fresh records as oil prices slide

-

Strike-hit Boeing leaves experts puzzled by strategy

-

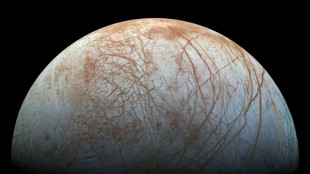

NASA launches probe to study if life possible on icy Jupiter moon

NASA launches probe to study if life possible on icy Jupiter moon

-

EVs seek to regain sales momentum at Paris Motor Show

-

NASA probe Europa Clipper lifts off for Jupiter's icy moon

NASA probe Europa Clipper lifts off for Jupiter's icy moon

-

'Unsustainable' housing crisis bedevils Spain's socialist govt

-

Stocks shrug off China disappointment but oil slides

Stocks shrug off China disappointment but oil slides

-

Stocks diverge, oil retreats as China disappoints markets

-

Trio wins economics Nobel for work on wealth inequality

Trio wins economics Nobel for work on wealth inequality

-

Ex-Stasi officer jailed over 1974 Berlin border killing

-

Shanghai stocks gain after stimulus briefing as markets rally

Shanghai stocks gain after stimulus briefing as markets rally

-

Shanghai stocks gain after stimulus briefing as Asian markets rally

-

Nearly 90, but opera legend Kabaivanska is still calling tune

Nearly 90, but opera legend Kabaivanska is still calling tune

-

With inflation down, ECB eyes faster tempo of rate cuts

-

Is life possible on a Jupiter moon? NASA goes to investigate

Is life possible on a Jupiter moon? NASA goes to investigate

-

Ex-Stasi officer faces verdict over 1974 Berlin border killing

-

Role of government, poverty research tipped for economics Nobel

Role of government, poverty research tipped for economics Nobel

-

In milestone, SpaceX 'catches' megarocket booster after test flight

-

In a first, SpaceX 'catches' megarocket booster after test flight

In a first, SpaceX 'catches' megarocket booster after test flight

-

Bangladeshi Hindus shrug off attack worries to celebrate festival

-

Ubisoft fears assassin's hit over falling sales

Ubisoft fears assassin's hit over falling sales

-

Vietnam, China hold talks on calming South China Sea tensions

-

SpaceX will try to 'catch' giant Starship rocket shortly before landing

SpaceX will try to 'catch' giant Starship rocket shortly before landing

-

Japan's former empress Michiko discharged after surgery: reports

-

Japan's former empress Michiko discharged after surgey: reports

Japan's former empress Michiko discharged after surgey: reports

-

'Little Gregory' murder haunts France 40 years on

-

Tariffs, tax cuts, energy: What is in Trump's economic plan?

Tariffs, tax cuts, energy: What is in Trump's economic plan?

-

Amazon wants to be everything to everyone

-

Jewish school in Canada hit by gunfire for second time

Jewish school in Canada hit by gunfire for second time

-

With medical report Harris seeks to play health card against Trump

-

China-EU EV tariff talks in Brussels end with 'major differences': Beijing

China-EU EV tariff talks in Brussels end with 'major differences': Beijing

-

Buried Nazi past haunts Athens on liberation anniversary

-

Harris to release medical report confirming fitness for presidency: campaign

Harris to release medical report confirming fitness for presidency: campaign

-

Nobel prize a timely reminder, Hiroshima locals say

-

China offers $325 bn in fiscal stimulus for ailing economy

China offers $325 bn in fiscal stimulus for ailing economy

-

Small Quebec company dominates one part of NHL hockey: jerseys

-

Boeing to cut 10% of workforce as it sees big Q3 loss

Boeing to cut 10% of workforce as it sees big Q3 loss

-

Want to film in Paris? No sexism allowed

-

US, European markets rise as investors weigh rates, earnings

US, European markets rise as investors weigh rates, earnings

-

In Colombia, children trade plastic waste for school supplies

-

JPMorgan Chase profits top estimates, bank sees 'resilient' US economy

JPMorgan Chase profits top estimates, bank sees 'resilient' US economy

-

Little progress at key meet ahead of COP29 climate summit

-

'Party atmosphere': Skygazers treated to another aurora show

'Party atmosphere': Skygazers treated to another aurora show

-

Kyrgyzstan opens rare probe into glacier destruction

-

European Mediterranean states discuss Middle East, migration

European Mediterranean states discuss Middle East, migration

-

Thunberg leads pro-Palestinian, climate protest in Milan

-

Stock markets diverge before China weekend briefing

Stock markets diverge before China weekend briefing

-

EU questions shopping app Temu over illegal products risk

-

Han Kang's books sell out in South Korea after Nobel win

Han Kang's books sell out in South Korea after Nobel win

-

Shanghai markets sink ahead of briefing on mixed day for Asia

Will AI really destroy humanity?

The warnings are coming from all angles: artificial intelligence poses an existential risk to humanity and must be shackled before it is too late.

But what are these disaster scenarios and how are machines supposed to wipe out humanity?

- Paperclips of doom -

Most disaster scenarios start in the same place: machines will outstrip human capacities, escape human control and refuse to be switched off.

"Once we have machines that have a self-preservation goal, we are in trouble," AI academic Yoshua Bengio told an event this month.

But because these machines do not yet exist, imagining how they could doom humanity is often left to philosophy and science fiction.

Philosopher Nick Bostrom has written about an "intelligence explosion" he says will happen when superintelligent machines begin designing machines of their own.

He illustrated the idea with the story of a superintelligent AI at a paperclip factory.

The AI is given the ultimate goal of maximising paperclip output and so "proceeds by converting first the Earth and then increasingly large chunks of the observable universe into paperclips".

Bostrom's ideas have been dismissed by many as science fiction, not least because he has separately argued that humanity is a computer simulation and supported theories close to eugenics.

He also recently apologised after a racist message he sent in the 1990s was unearthed.

Yet his thoughts on AI have been hugely influential, inspiring both Elon Musk and Professor Stephen Hawking.

- The Terminator -

If superintelligent machines are to destroy humanity, they surely need a physical form.

Arnold Schwarzenegger's red-eyed cyborg in the movie "The Terminator", sent from the future by an AI to end human resistance, has proved a seductive image, particularly for the media.

But experts have rubbished the idea.

"This science fiction concept is unlikely to become a reality in the coming decades if ever at all," the Stop Killer Robots campaign group wrote in a 2021 report.

However, the group has warned that giving machines the power to make decisions on life and death is an existential risk.

Robot expert Kerstin Dautenhahn, from the University of Waterloo in Canada, played down those fears.

She told AFP that AI was unlikely to give machines higher reasoning capabilities or imbue them with a desire to kill all humans.

"Robots are not evil," she said, although she conceded programmers could make them do evil things.

- Deadlier chemicals -

A less overtly sci-fi scenario sees "bad actors" using AI to create toxins or new viruses and unleashing them on the world.

It turns out that large language models like GPT-3, which was used to create ChatGPT, are extremely good at inventing horrific new chemical agents.

A group of scientists who were using AI to help discover new drugs ran an experiment where they tweaked their AI to search for harmful molecules instead.

They managed to generate 40,000 potentially poisonous agents in less than six hours, as reported in the journal Nature Machine Intelligence.

AI expert Joanna Bryson from the Hertie School in Berlin said she could imagine someone working out a way of spreading a poison like anthrax more quickly.

"But it's not an existential threat," she told AFP. "It's just a horrible, awful weapon."

- Species overtaken -

The rules of Hollywood dictate that epochal disasters must be sudden, immense and dramatic -- but what if humanity's end were slow, quiet and not definitive?

"At the bleakest end our species might come to an end with no successor," philosopher Huw Price says in a promotional video for Cambridge University's Centre for the Study of Existential Risk.

But he said there were "less bleak possibilities" where humans augmented by advanced technology could survive.

"The purely biological species eventually comes to an end, in that there are no humans around who don't have access to this enabling technology," he said.

The imagined apocalypse is often framed in evolutionary terms.

Stephen Hawking argued in 2014 that ultimately our species will no longer be able to compete with AI machines, telling the BBC it could "spell the end of the human race".

Geoffrey Hinton, who spent his career building machines that resemble the human brain, most recently at Google, talks in similar terms of "superintelligences" simply overtaking humans.

He told US broadcaster PBS recently that it was possible "humanity is just a passing phase in the evolution of intelligence".

U.Ndiaye--CPN